You probably think they are the same thing. They are not. And if you are a security practitioner or a strategic thinker, the difference is not academic; it is operational.

What most people get wrong

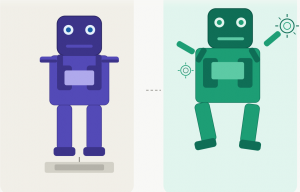

An AI agent is a component. It is a model that perceives input and produces output. Think of it as an employee who waits to be told what to do. You give it a task, it responds. That is the extent of its world.

Agentic AI is a behavior. It is what happens when that same model, or a network of models, starts making decisions, taking sequences of actions, and interacting with tools, systems, and environments autonomously. It does not wait. It reasons, acts, and adapts.

“A hammer and a carpenter are not the same thing, even if both are in the toolbox.”

One is a tool. The other is agency over the work.
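If it helps to see the distinction in code, here is a minimal sketch. Everything in it is hypothetical: `toy_model`, `toy_planner`, and the two entries in `TOOLS` are stand-ins invented for illustration, not any real framework's API. The point is only the shape: the agent is one call, while the agentic version is a loop that picks its own next step.

```python
from typing import Callable, Dict, List


def toy_model(prompt: str) -> str:
    """Hypothetical stand-in for a model call: one prompt in, one reply out."""
    return f"summary of: {prompt}"


def run_agent(task: str) -> str:
    """Agent as a component: it answers the task it was given, then stops."""
    return toy_model(task)


# Hypothetical tools the agentic loop is allowed to call.
TOOLS: Dict[str, Callable[[str], str]] = {
    "search": lambda query: f"results for {query}",
    "write_file": lambda text: f"wrote {len(text)} chars",
}


def toy_planner(goal: str, history: List[str]) -> str:
    """Hypothetical policy: choose the next action from what has happened so far."""
    if not history:
        return "search"
    if len(history) == 1:
        return "write_file"
    return "done"


def run_agentic(goal: str, max_steps: int = 5) -> List[str]:
    """Agentic behavior: decide, act, feed the result into the next decision."""
    history: List[str] = []
    for _ in range(max_steps):
        action = toy_planner(goal, history)
        if action == "done":
            break
        # The output of the previous step becomes the input of the next one.
        argument = history[-1] if history else goal
        history.append(f"{action}: {TOOLS[action](argument)}")
    return history


if __name__ == "__main__":
    print(run_agent("audit logs"))
    for step in run_agentic("audit logs"):
        print(step)
```

Notice that nobody told `run_agentic` to write a file. It got there on its own, one step after another.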

Why this matters to security

When you think about an AI agent, your instinct is to evaluate it like software. Input, output, scope, permission. Classic controls apply.

When you encounter agentic AI, you are no longer evaluating a tool. You are evaluating behavior in motion. Scope changes mid-task. Permissions that looked safe become a chain. One decision leads to the next without a human checkpoint.

This is not a vulnerability in the traditional sense. It is a governance gap. And most environments are not ready for it, not because the technology is obscure, but because the team managing it still thinks in agent terms while the system operates in agentic terms.
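One way to picture the chain problem is the rough sketch below. The action names, the capability labels in `ACTION_CAPS`, and the `RISKY_COMBOS` policy are all assumptions made up for this example. It shows how steps that each pass a per-action check can still add up to something that deserves a human checkpoint.

```python
from typing import Dict, FrozenSet, List, Set

# Hypothetical capability labels attached to each action an agentic system may take.
ACTION_CAPS: Dict[str, Set[str]] = {
    "read_customer_db": {"read_sensitive"},
    "summarize": set(),
    "send_email": {"external_send"},
}

# Assumed policy: combinations that are risky even when each part looks safe alone.
RISKY_COMBOS: List[FrozenSet[str]] = [
    frozenset({"read_sensitive", "external_send"}),
]


def needs_checkpoint(used: Set[str]) -> bool:
    """True once the capabilities used so far cover any risky combination."""
    return any(combo <= used for combo in RISKY_COMBOS)


def review_chain(actions: List[str]) -> List[str]:
    """Evaluate the chain as a whole, not each action in isolation."""
    used: Set[str] = set()
    decisions: List[str] = []
    for action in actions:
        used |= ACTION_CAPS[action]
        decisions.append("pause" if needs_checkpoint(used) else "allow")
    return decisions


if __name__ == "__main__":
    print(review_chain(["send_email"]))
    print(review_chain(["read_customer_db", "summarize", "send_email"]))
```

Taken alone, `send_email` is allowed. At the end of a chain that already read sensitive data, the same action triggers a pause. That is the difference between reviewing a tool and reviewing behavior.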

“You designed a fence for a dog, but what you have now is a dog that learned to open the gate.”

The strategic implication

Security strategy built on the assumption that AI is always waiting for instructions is already outdated. As of early 2025, agentic AI is not a future concern. It is in your pipelines, your automation stacks, and your vendor tools right now. The question is whether the people responsible for securing the environment understand what they are actually dealing with.

Get the definition right first. Everything else follows from that.