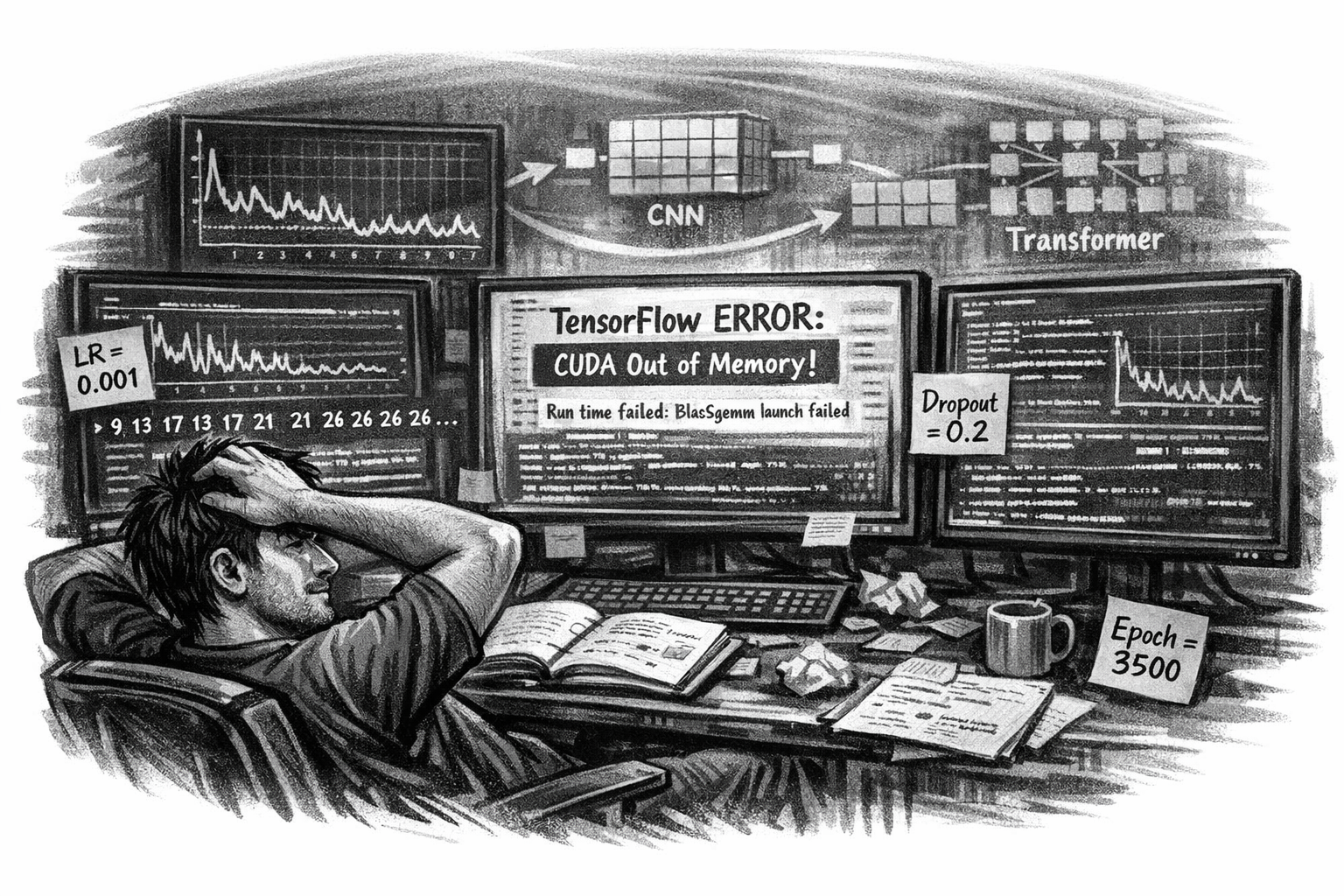

Where Curiosity Meets Chaos

These days my experimental machine learning project is deep in the trenches. I’m no longer chasing novelty; I’m chasing stability. Every run, every epoch, every tensor feels like a conversation with a machine that refuses to answer clearly.

The RNN repeats itself. The dropout layers don’t help. The learning rate oscillates between optimism and despair. I’m watching numbers loop like déjà vu: 900,1347,-1717,21,226,00081, as if the model is mocking me.

The Struggle of Parameters

This stage isn’t glamorous. It’s about patience and precision. I’m testing:

- Learning rates from 1 down to 1e-6. Learning rate schedules, the tempo of patience: too high, and the model overshoots and forgets; too low, and it never learns.

- Dropouts that erase too much or too little. Dropout layers, the controlled amnesia that teaches resilience.

- Batch sizes that change the rhythm of training. Batch normalization, the quiet stabilizer that keeps the orchestra in tune.

- Validation splits that distort the balance between learning and forgetting.

- Activation layers that decide whether the model breathes or suffocates.

- SGD (Stochastic Gradient Descent) is fast but volatile, like a heartbeat that never settles.

- Adam is adaptive and forgiving, but sometimes too confident.

- RMSProp smooths the chaos, yet hides the spikes that matter.

- Leaky ReLU lets the negatives breathe, but sometimes leaks too much.

- Sigmoid is elegant, but suffocates gradients when pushed too far.

- Tanh is balanced, but fragile under deep recurrence.

- Softmax, the diplomat, turning raw outputs into probabilities that pretend to make sense.

- L2 regularization, the discipline that prevents indulgence.

- Early stopping, the mercy rule when obsession goes too far.
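
A sweep like the one in the list above can be written down as a plain grid before any training code exists. This is only a minimal sketch: the learning rates follow the 1 to 1e-6 range mentioned above, but the dropout values, batch sizes, and the `build_grid` helper are hypothetical placeholders, not the values or code actually used in this project.

```python
import itertools

# Log-spaced learning rates from 1 down to 1e-6 (the range named in the list).
learning_rates = [10.0 ** -k for k in range(7)]  # 1, 0.1, ..., 1e-6

# Hypothetical candidate values; the post does not say which ones were tried.
dropouts = [0.1, 0.3, 0.5]
batch_sizes = [32, 128]
optimizers = ["sgd", "adam", "rmsprop"]


def build_grid():
    """Return every hyperparameter combination as a list of config dicts."""
    keys = ("lr", "dropout", "batch_size", "optimizer")
    combos = itertools.product(learning_rates, dropouts, batch_sizes, optimizers)
    return [dict(zip(keys, combo)) for combo in combos]


grid = build_grid()
print(len(grid), "configurations to try")
print("first:", grid[0])
print("last:", grid[-1])
```

Even this small grid shows why the stage demands patience: a handful of options per knob multiplies into more than a hundred runs.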

Every adjustment feels like surgery on a living organism. Too much regularization, and it forgets. Too little, and it hallucinates.
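
The early stopping "mercy rule" from the list is easy to sketch without any framework. The `EarlyStopping` class, its `patience` and `min_delta` parameters, and the loss values below are all assumptions made for illustration; deep learning libraries ship their own versions with similar ideas but different interfaces.

```python
class EarlyStopping:
    """Signal a stop once validation loss fails to improve for `patience` epochs."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience      # how many bad epochs to tolerate
        self.min_delta = min_delta    # minimum drop that counts as improvement
        self.best = float("inf")
        self.bad_epochs = 0

    def should_stop(self, val_loss):
        if val_loss < self.best - self.min_delta:
            # Real improvement: remember it and reset the counter.
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Made-up validation losses, for illustration only.
losses = [1.00, 0.80, 0.70, 0.72, 0.71, 0.75, 0.74]

stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(losses):
    if stopper.should_stop(loss):
        stopped_at = epoch
        break

print("stopped at epoch", stopped_at, "with best loss", stopper.best)
```

A larger `patience` tolerates more noise in the validation curve, at the cost of training longer after the model has stopped improving.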

The Repetition Problem

The model keeps predicting the same sequences. It’s not random. It’s not learning. It’s memorizing. I’ve tried shuffling, noise injection, teacher forcing, reversed sequences, even GPU acceleration. CUDA breaks. TensorFlow stalls. The tokens stay loyal to their pattern.

It’s maddening and fascinating at once. The machine is consistent, just not intelligent yet.
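
One way to put a number on this kind of looping is to count how many n-grams in a generated sequence are unique. This is a diagnostic sketch, not a fix, and not necessarily how the repetition was measured here; the `distinct_ngram_ratio` function and both sample sequences are invented for illustration.

```python
def distinct_ngram_ratio(tokens, n=3):
    """Fraction of n-grams that are unique; 1.0 means no n-gram repeats."""
    ngrams = [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
    if not ngrams:
        return 1.0  # sequence too short to contain any n-gram
    return len(set(ngrams)) / len(ngrams)


# Made-up outputs: one stuck in a four-token loop, one with no repeats.
looping = [7, 3, 9, 4] * 5
varied = list(range(20))

print("looping:", round(distinct_ngram_ratio(looping), 3))
print("varied: ", round(distinct_ngram_ratio(varied), 3))
```

Tracking a ratio like this across runs would show whether shuffling or noise injection actually loosens the loop, or whether the tokens are still loyal to their pattern.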

Decision Trees: The Detour

When the RNN refused to evolve, I pivoted to decision trees, a simpler, more interpretable model. Suddenly, randomness appeared. Loss dropped. Predictions looked alive.

Epochs stretched into hundreds, then thousands. Loss values danced between 7.21 and 24.72. For the first time, the model produced packet sequences that matched historical logs. It wasn’t luck; it was structure emerging from chaos.

The Human Side of Debugging

This phase is emotional. Every failed run feels personal. Every improvement feels earned. I’m not just tuning hyperparameters; I’m negotiating with uncertainty. I’m learning how fragile “learning” really is.

“Understanding the failure is the first sign of progress.”

The Reality of Mid‑Project Work

This is the part nobody writes about. The endless logs. The broken CUDA installs. The validation splits that ruin everything. The moments when the model predicts yesterday’s numbers and you wonder if it’s mocking you or teaching you.

But this is where real research happens: not in the results, but in the resistance. 😀